Does bioRxiv Fulfill Its Purpose?

Data reveal that putting preprints up for comment does nothing to change their fate

When I worked as a managing editor, an editor taught me a rule of thumb: about 1 in 3 papers isn't worth publishing anywhere. Over the years, that rule has been reinforced by nearly every frank conversation I've had with editors, by the peer reviews I've performed, and by various data points I've seen. Papers end up in the circular file of science and scholarship for many reasons, but again and again, the rule of thumb seems to hold.

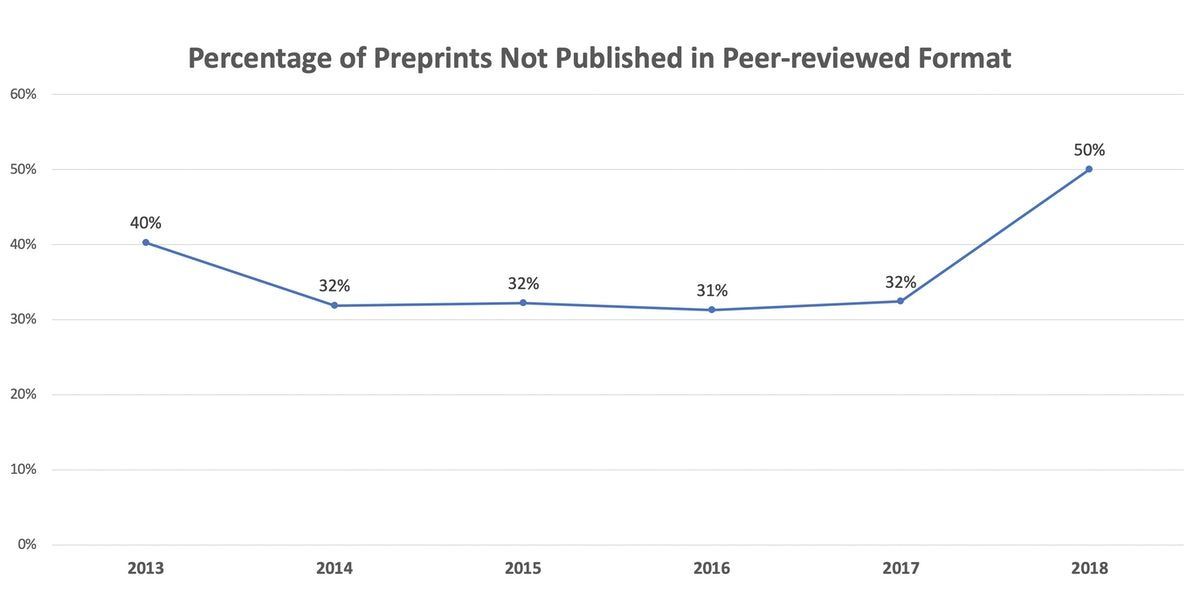

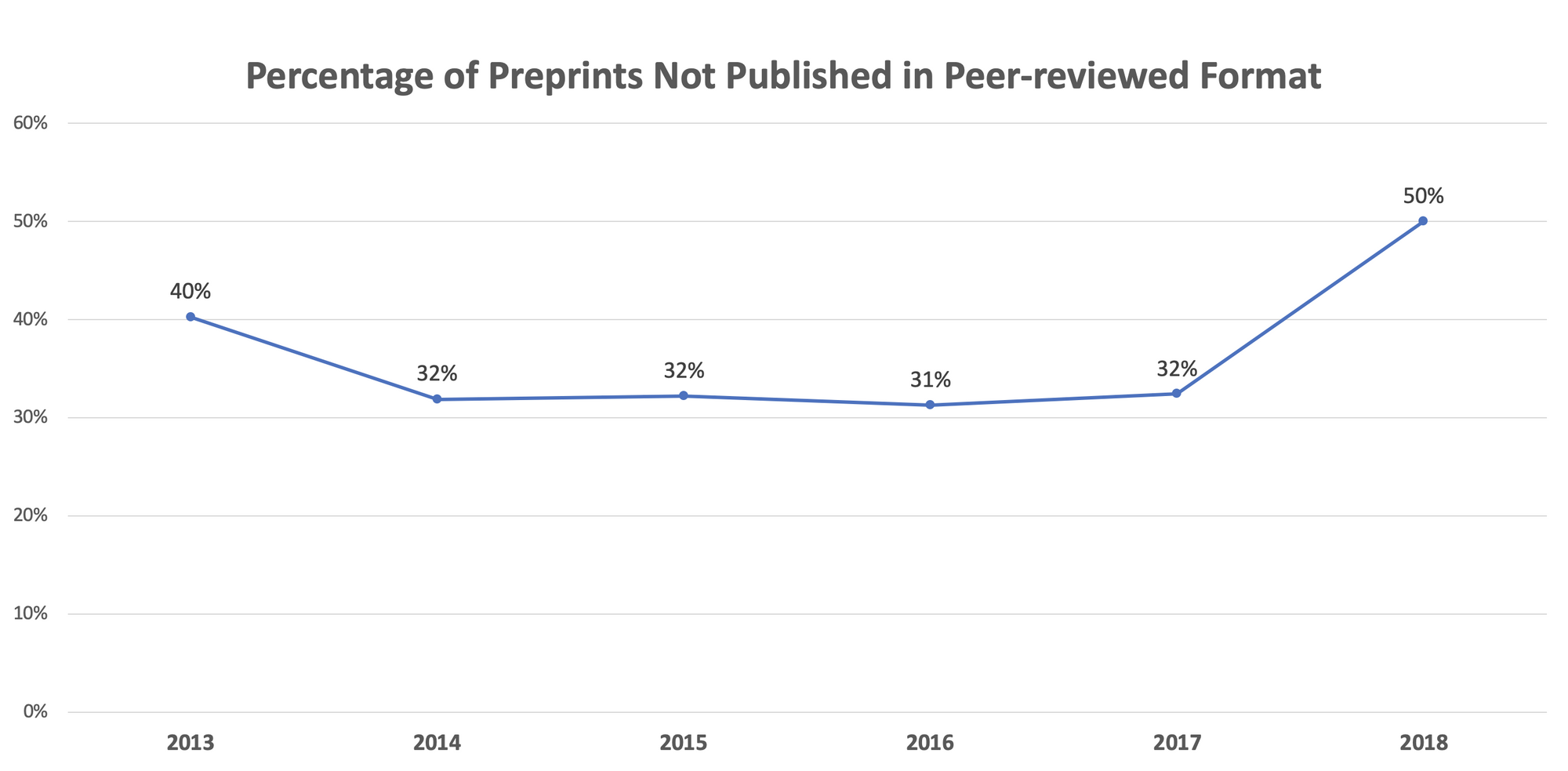

Yesterday, I published data derived from more than 37,000 posted preprints showing that, consistently, about 32% of bioRxiv preprints never find a home in a peer-reviewed journal. (This followed earlier posts on where bioRxiv preprints end up being published and on how PLOS fares relative to this registry of papers.)

Given that bioRxiv has not improved the overall publication rate beyond the level the traditional rule of thumb predicts, it's tempting to declare the 1-in-3 rule proven: Q.E.D.

Today, I published data showing that 57% of preprints are posted after they've been submitted to the journal that ultimately publishes them. On average, preprints are posted more than a month after submission, and the gap between submission and preprint posting doubled between 2016 and 2018.

But what does all this mean for bioRxiv's purpose and goals? In its own words, bioRxiv's stated purpose is to serve as a useful way station as papers move on to journals:

By posting preprints on bioRxiv, authors are able to make their findings immediately available to the scientific community and receive feedback on draft manuscripts before they are submitted to journals.

If this is bioRxiv's intention (to give authors feedback on draft manuscripts before submission), then it isn't serving that purpose for the majority of authors, who post only after they have already submitted.

Even for authors who post before submitting to a journal, bioRxiv is having no discernible effect. Not only does the percentage of unpublished manuscripts match the empirical observations of experienced editors and publishers, but it is also consistent from year to year (2013 had only 77 preprints, and 2018 is a partial year):

If bioRxiv's feedback mechanisms were producing better papers, you'd expect these percentages to decline over time: community input would improve more marginal papers, making them acceptable to peer reviewers and journal editors. That isn't happening.

But is there a hidden effect in hosting the 1-in-3 discards? After all, 11,000 to 15,000 such manuscripts are now posted, with more pouring in all the time. Of any 100 preprints chosen at random, about 32 will prove unpublishable and will remain unreviewed and unedited. Yet bioRxiv is bringing this detritus to a search engine near you, where it can be promoted. Scientists and others promote unreviewed bioRxiv preprints as if they were published papers. It's not hard to find examples of preprints promoted this way: here, here, here, and here. There's even a way to create XML feeds of bioRxiv articles for pages that want to promote unreviewed preprints.

If bioRxiv were fulfilling its purpose, I'd expect to see three things. First, preprints still unreviewed and unpublished after two to three years would be deprecated, so that bioRxiv policy reflected their rejection by the wider scientific community. Second, most authors would post before submission, as intended, rather than after submission as a marketing and Green OA solution. Third, a higher percentage of preprints would be accepted for publication, having been improved by pre-publication community input.

Since we see none of these things, I don't think bioRxiv is currently meeting its stated purpose.

Maybe it’s time for a rethink.

To see the full analyses of bioRxiv published this week, the complete archives of "The Geyser," and future analyses, interviews, and essays, subscribe.